Finding the Hidden Gems in Your Data: An IoT Demo

By David G. Simmons

updated December 14, 2025


As the New IoT Guy at InfluxData, I knew it was sort of a given that I'd have to create a large-scale IoT demo using the InfluxData stack. It just had to happen, and now it has. I'll give you a complete rundown of what I built, how I built it, and why it matters. Finally, you'll see how visualizing your IoT data can give you fresh insights into things you'd otherwise miss.

What I Built

Apparently, before I joined InfluxData there had been some discussion about building such a demo, but no one had really pursued it. We are a fairly remote-worker-oriented company, so I decided to build a demo that I could distribute throughout the company, letting the remote workers (like me) participate too. I wanted to monitor things that were easy to sense and universally present in everyone's environment, so I settled on temperature, atmospheric pressure, humidity, and light. Easy to do. For the sensor platform itself, I chose the Photon from Particle.io. I've worked with Particle a fair amount in the past, and it's easy to get something up and running quickly.

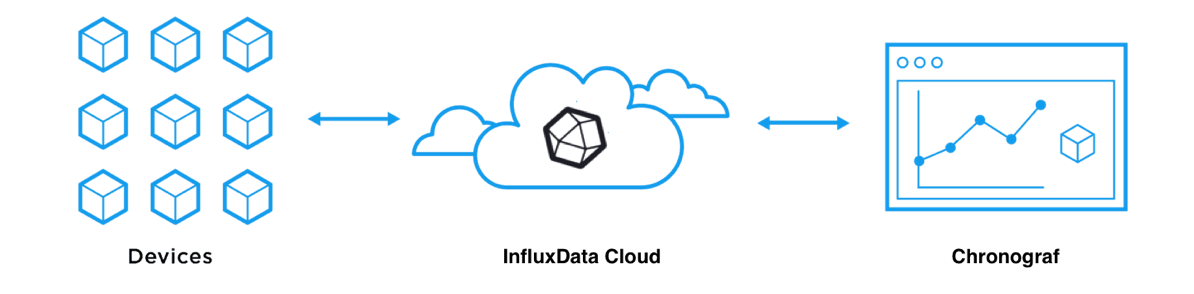

Generally, the architecture for a Particle.io IoT project is as follows:

Your Particle devices speak directly to the Particle cloud, and then your applications get their data from the Particle cloud. This really wasn’t what I was looking to do since I obviously wanted to use the InfluxData stack to collect and analyze the data. So my architecture looks like this:

Yes, it looks identical. But rather than using the Particle cloud, I'm using the InfluxData Cloud to collect the data, and Chronograf to build a dashboard for visualization. A subtle but important difference, to be sure. One of the things this gives us is the ability to persist data and look at historical data, which I like. Trend analysis is important in IoT data analysis, and this setup gives me that very easily.

That’s the high level. Now, to dive a little deeper, we’ll look at how I built it.

How I Built It

First, I researched and collected the sensors I wanted, and all the other parts I would need. Here are the specific parts:

- Particle Photon: the sensor node
- BME280 breakout board from Adafruit: the temperature, pressure, and humidity sensor
- TSL2561 breakout board from Adafruit: the light sensor (visible and infrared, as well as lux)
- USB wall power units
- Project boxes
- Wire, a drill bit, rubber grommets, and hot glue

Building the Hardware

If you don't want to put them in nice boxes, you can of course skip the project boxes, drill bit, grommets, and hot glue. But since I am distributing them across the company, I wanted them to look presentable and be fairly secure. Now on to the build.

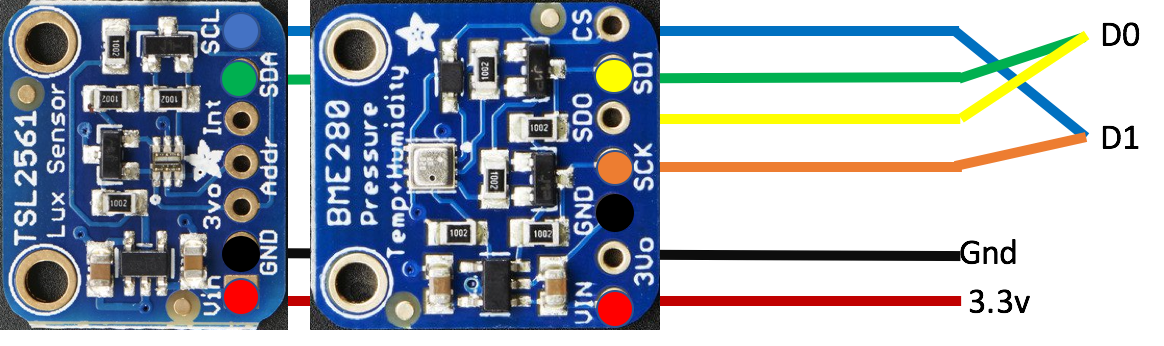

That's the time-lapse of me building everything except the sensors. The camera malfunctioned during the sensor build, so we lost that part. Since both sensors are I2C devices, I was able to build a simple ribbon cable to connect everything:

As you can see, it uses only two signal pins, D0 (SDA) and D1 (SCL), plus the 3.3V supply line and ground. Each of the sensors has a different I2C address by default, so they can share the same pins and still be addressed separately. The TSL2561 actually supports three addresses, depending on whether you pull its address pin high, pull it low, or leave it floating. Floating is the default, and it's what I used, since it doesn't conflict with the BME280's address.

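```cpp
#define TSL2561_ADDR_FLOAT (0x39)  // TSL2561 with its address pin left floating
#define BME280_ADDRESS (0x77)      // BME280 default address on the Adafruit breakout
```

All that was left was to solder the sensor rig to the Photon itself, and the sensors were ready to go!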
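To make the "different addresses" point concrete, here is a small host-side C++ sketch that checks whether a set of devices can share one SDA/SCL pair. The address values come from the sensor datasheets (TSL2561: 0x29, 0x39, or 0x49 depending on its address pin; BME280: 0x76 or 0x77 depending on its SDO pin). The constant names and the `busAddressesOk` helper are hypothetical, for illustration only; the Adafruit libraries define their own macros, like the two above.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical names for the 7-bit I2C addresses each breakout can use.
// TSL2561: address pin low = 0x29, floating = 0x39 (default), high = 0x49.
constexpr std::uint8_t kTsl2561Low   = 0x29;
constexpr std::uint8_t kTsl2561Float = 0x39;
constexpr std::uint8_t kTsl2561High  = 0x49;
// BME280: SDO pin low = 0x76, high = 0x77 (Adafruit breakout default).
constexpr std::uint8_t kBme280Alt     = 0x76;
constexpr std::uint8_t kBme280Default = 0x77;

// True if every device sharing the bus has a distinct address in the usable
// 7-bit range 0x08-0x77 (0x00-0x07 and 0x78-0x7F are reserved by I2C).
bool busAddressesOk(const std::vector<std::uint8_t>& addrs) {
    for (std::size_t i = 0; i < addrs.size(); ++i) {
        if (addrs[i] < 0x08 || addrs[i] > 0x77) return false;  // reserved address
        for (std::size_t j = i + 1; j < addrs.size(); ++j)
            if (addrs[i] == addrs[j]) return false;             // two devices collide
    }
    return true;
}
```

With the defaults (0x39 and 0x77) the check passes, which is why no jumpers were needed; two BME280s left at 0x77 would fail it.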

Packaging was straightforward as well. I simply drilled a 1/2” hole in the side of each project box, placed a 9/32” rubber grommet (purchased at Home Depot for $0.97 each) over the USB connector, and secured it in the hole. A little hot glue to hold the sensor rig in place, and I was done. Neatly packaged sensors ready to ship!

But wait, I didn't install any software anywhere! Correct! One of the nice things about the Particle.io platform is that it can push firmware over the air (OTA). I could build the software in the Particle IDE, and when each sensor came online, it would automatically receive the right firmware and start running. If I need to change the firmware later on, the update is pushed to all the devices through the same OTA mechanism, and everything stays up to date.

Building the Software

The Particle Photon is easily programmed using either Particle's web-based IDE or their Desktop IDE. The two sensors I chose are supported by libraries that you simply include in your program, Adafruit_TSL2561_U (2.0.10) and Adafruit_BME280 (1.1.4), both of which you can add using the library manager. You'll also need the HttpClient (0.0.5) library, at least until I finish writing the InfluxData Arduino/Particle library.

So, to get the easy stuff out of the way, we of course need to include the libraries mentioned above and define a few things to start with.

```cpp
#include <HttpClient.h>
#include "Adafruit_Sensor.h"
#include "Adafruit_BME280.h"
#include "Adafruit_TSL2561_U.h"   // needed for Adafruit_TSL2561_Unified and TSL2561_ADDR_FLOAT

#define SEALEVELPRESSURE_HPA (1013.25)   // standard sea-level pressure, used for the altitude estimate

PRODUCT_ID(XXXX); // This is the Particle.io product number
PRODUCT_VERSION(1);

// Both sensors share the I2C bus; 12345 is an arbitrary Unified Sensor ID
Adafruit_BME280 bme;
Adafruit_TSL2561_Unified tsl = Adafruit_TSL2561_Unified(TSL2561_ADDR_FLOAT, 12345);

// Latest sensor readings
double temperature = 0.00;   // ºC, as reported by the BME280
double pressure = 0.00;
double altitude = 0.00;
double humidity = 0.00;
uint16_t broadband = 0;
uint16_t infrared = 0;
double lux = 0.00;

// Device identity: the ID comes from the hardware, the name from the Particle Cloud
String myID = System.deviceID();
String myName = "";

HttpClient http;
http_header_t headers[] = {
    { "Accept" , "*/*"},
    { "User-agent", "Particle HttpClient"},
    { NULL, NULL } // NOTE: Always terminate headers with NULL
};
http_request_t request;
http_response_t response;

uint16_t ir_light;
uint16_t viz_light;
```

Most of those variables are self-explanatory. The myName one, however, is a little tricky. On the Particle platform, each device can be assigned a unique 'name'. This name doesn't live on the device itself, but in the Particle Cloud, so it has to be retrieved from the cloud before it can be used. Since we aren't using GPS to locate these devices, we decided to set the 'name' to a location, like “NewYorkNY” or “ClevelandOH”. This tells us roughly where the device is while keeping its actual location (which may be an employee's home) obfuscated. There's a trick to getting the name:

```cpp
// Called when the Particle Cloud sends this device's name
void handler(const char *topic, const char *data) {
    myName = String(data);
}

void setup() {
    // ...
    Particle.subscribe("spark/device/name", handler);
    Particle.publish("spark/device/name");   // ask the cloud to send the name
    // ...
}
```

You have to subscribe to the device-name event with a callback handler and then request the name by publishing to that same topic. Once the cloud responds, the handler is called and we have the name. I won't start sending data until the device name/location has been retrieved, so that the data in the InfluxDB instance stays consistent. Speaking of the InfluxDB instance, also in that setup() function I need to initialize the http_request_t object and set some of its parameters:

```cpp
// InfluxDB 1.x HTTP write endpoint: POST /write?db=<database>
request.hostname = "myhost.com";
request.port = 8086;
request.path = "/write?db=iotdata";
```

Now that the setup is done, all that remains is a loop that collects the sensor readings, formats them in InfluxDB line protocol, and sends them.

```cpp
void loop() {
    getReadings();   // refresh the global sensor values
    double fTemp = temperature * 9.0 / 5.0 + 32.0;   // ºC -> ºF

    if (myName != "") {
        // One line-protocol point: measurement,tags fields
        request.body = String::format("influxdata_sensors,id=%s,location=%s temp_c=%f,temp_f=%f,humidity=%f,pressure=%f,altitude=%f,broadband=%d,infrared=%d,lux=%f", myID.c_str(), myName.c_str(), temperature, fTemp, humidity, pressure, altitude, broadband, infrared, lux);
        http.post(request, response, headers);
        delay(500);
    } else {
        // No name/location yet: wait and check again
        delay(5000);
    }
}
```

The BME280 returns temperature only in ºC, so in order to have both, I do a quick conversion of the temperature to ºF. Again, I won't send data until I've retrieved the device name/location, but once I have it, I send a reading roughly every 500ms (the delay, plus however long the readings and the HTTP POST take). Do I really need 2 readings per second? No, probably not. But I'm deploying a dozen of these, so we'll be sending up to 24 readings/second to the InfluxDB instance, just to give it something to do.
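The arithmetic in that paragraph can be sketched as plain host-side C++ (not Particle firmware). `celsiusToFahrenheit` mirrors the conversion in loop(); the two rate helpers are hypothetical names, and they give an upper bound, since the real interval also includes the time spent reading sensors and posting.

```cpp
#include <cassert>

// The conversion used in loop(): ºF = ºC × 9/5 + 32.
double celsiusToFahrenheit(double c) {
    return c * 9.0 / 5.0 + 32.0;
}

// Upper-bound write rate for a fleet: each device sends at most one point
// per interval (real intervals run longer because the sensor reads and the
// HTTP POST also take time).
double pointsPerSecond(int devices, int intervalMs) {
    return devices * (1000.0 / intervalMs);
}

// Points accumulated per day at that rate (86,400 seconds per day).
long long pointsPerDay(int devices, int intervalMs) {
    return static_cast<long long>(pointsPerSecond(devices, intervalMs) * 86400.0);
}
```

At the nominal 500ms interval, a dozen devices come to at most 24 points per second, or about 2.07 million points per day, each carrying eight fields. For reference, 25ºC converts to 77ºF.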

The design of my database, and hence the layout of the line-protocol HTTP POST above, lets us collect all the sensor data per location and by sensor ID: `id` and `location` are tags, and the readings themselves are fields. We think this will make it easier to dive into the data by location to find interesting events, while still allowing us to see overall trends.
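Here is a host-side C++ sketch of what that POST carries: a line-protocol body (`measurement,tag=value,... field=value,...`) wrapped in an HTTP request to the InfluxDB 1.x `/write` endpoint. The helper names are hypothetical, and the raw request is only illustrative; the Particle HttpClient library assembles its own request and headers. One difference from the `String::format` call in loop(): this version backslash-escapes commas, equals signs, and spaces in tag keys, tag values, and field keys, as line protocol requires. Location names like “NewYorkNY” avoid the problem, but a name such as “New York NY” would break the unescaped version.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdio>
#include <string>
#include <utility>
#include <vector>

using Tags   = std::vector<std::pair<std::string, std::string>>;
using Fields = std::vector<std::pair<std::string, double>>;

// Backslash-escapes commas, equals signs, and spaces, as line protocol
// requires for tag keys, tag values, and field keys.
std::string escapeKey(const std::string& s) {
    std::string out;
    for (char c : s) {
        if (c == ',' || c == '=' || c == ' ') out += '\\';
        out += c;
    }
    return out;
}

// Formats one point as "measurement,tag=value,... field=value,...".
// Fields are written as floats and no timestamp is appended, so InfluxDB
// stamps the point with the time it receives it.
// Assumes the measurement name itself needs no escaping.
std::string toLineProtocol(const std::string& measurement,
                           const Tags& tags, const Fields& fields) {
    std::string line = measurement;
    for (const auto& t : tags)
        line += "," + escapeKey(t.first) + "=" + escapeKey(t.second);
    line += ' ';
    for (std::size_t i = 0; i < fields.size(); ++i) {
        char num[32];
        std::snprintf(num, sizeof num, "%.10g", fields[i].second);
        if (i > 0) line += ',';
        line += escapeKey(fields[i].first) + "=" + num;
    }
    return line;
}

// Wraps a line-protocol body in a raw HTTP/1.1 POST (illustrative only).
std::string buildWriteRequest(const std::string& host, int port,
                              const std::string& path, const std::string& body) {
    std::string req = "POST " + path + " HTTP/1.1\r\n";
    req += "Host: " + host + ":" + std::to_string(port) + "\r\n";
    req += "Content-Length: " + std::to_string(body.size()) + "\r\n";
    req += "\r\n";  // blank line ends the headers
    return req + body;
}

// A successful InfluxDB 1.x write returns 204 No Content.
bool writeSucceeded(int status) { return status == 204; }
```

Because tags are indexed, grouping by location is cheap; a query along the lines of `SELECT mean("temp_f") FROM "influxdata_sensors" WHERE time > now() - 1h GROUP BY "location"` returns one series per device location.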

Why It Matters


Being able to send IoT data to InfluxDB easily and efficiently is a huge win. IoT data is time series data, by definition. Why do I say that? Because IoT data is `<sensor_reading>@<time>`, and that is a time series. Being able to bring IoT data online easily, efficiently, and quickly is essential to the success of an IoT deployment.

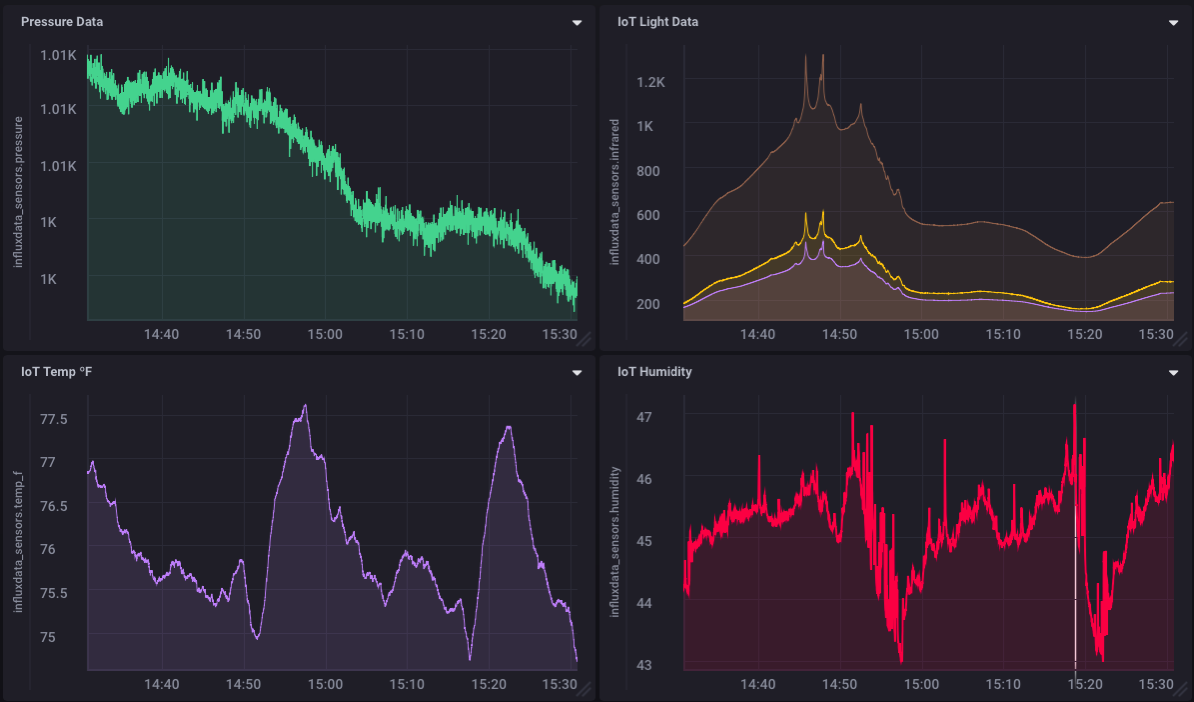

Once I had the sensor online (I'm still shipping the others around, so the data will get better and more complex as they come online), it took all of about 3 minutes to build the dashboard so that I could see what was going on.

You'll notice that the pressure has been steadily dropping, which correctly indicates that a storm is approaching. The light data corroborates this, as clouds were indeed moving in. As I was looking at the graphs, I was a little confused by the temperature and humidity plots. Why was the temperature slowly dropping to 74º, then spiking back to 77º? As I was watching this and pondering it, my central air conditioning kicked on, and I watched the temperature and humidity drop. So while I was not explicitly monitoring my AC unit, it turns out I can effectively monitor when it cycles on and off just from the temperature and humidity graphs on my dashboard. It's amazing what you can discover when you can actually see your data! Are there unexpected gems hidden in your data that you're simply not aware of because you can't see them?
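The AC cycles were spotted by eye on the dashboard. As a rough sketch of how the same pattern might be flagged automatically, the C++ below scans a series of `<sensor_reading>@<time>` values and reports where the value moves by at least some threshold within a short window. The `Reading` struct, the `findSharpChanges` helper, and the thresholds used in the example (1º within 2 minutes) are all hypothetical, chosen for illustration rather than tuned on the demo's real data.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// A reading is a value at a time: <sensor_reading>@<time>.
struct Reading {
    double t;      // seconds since the start of the series
    double value;  // e.g. temperature in ºF
};

// Returns the index of each reading that is followed, within `window`
// seconds, by a change (up or down) of at least `minDelta`. After a hit,
// the scan resumes just past the change so one event is reported once.
std::vector<std::size_t> findSharpChanges(const std::vector<Reading>& r,
                                          double minDelta, double window) {
    std::vector<std::size_t> starts;
    for (std::size_t i = 0; i < r.size(); ++i) {
        for (std::size_t j = i + 1; j < r.size() && r[j].t - r[i].t <= window; ++j) {
            if (std::fabs(r[j].value - r[i].value) >= minDelta) {
                starts.push_back(i);
                i = j;  // the outer ++i then continues just past this change
                break;
            }
        }
    }
    return starts;
}
```

On a series that drifts slowly, drops sharply when the AC kicks on, and later rebounds, this flags the drop and the rebound but not the slow drift. In a real deployment you would more likely run this kind of check server-side against InfluxDB rather than on the device.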

And this is why it matters what you do with your IoT data. It's “Aha!” moments like these that can lead to changes in business processes that ultimately result in greater efficiencies and huge cost savings.

Keep coming back for updates on this IoT demo as we grow it and roll it out further as a showcase for using InfluxData in IoT deployments. The entire demo will be running in the InfluxDB Cloud, and we're planning to make some of this data publicly accessible so that you can view and interpret it yourself.